As an Internet marketing strategy, SEO considers how search engines work, the computer-programmed algorithms that dictate search engine behavior, what people search for, the actual search terms or keywords typed into search engines, and which search engines a target audience prefers. The unpaid traffic that SEO targets may originate from different kinds of searches, including image search, video search, academic search, news search, and industry-specific vertical search engines. SEO is performed because a website receives more visitors from a search engine when it ranks higher on the search engine results page (SERP). These visitors can then potentially be converted into customers.

Webmasters and content providers began optimizing websites for search engines in the mid-1990s, as the first search engines were cataloging the early Web. Initially, webmasters only needed to submit the address of a page, or URL, to the various engines, which would send a web crawler to crawl that page, extract links to other pages from it, and return information found on the page to be indexed. The process involves a search engine spider downloading a page and storing it on the search engine's own server. A second program, known as an indexer, extracts information about the page, such as the words it contains, where they are located, and any weight for specific words, as well as all links the page contains. All of this information is then placed into a scheduler for crawling at a later date.

Website owners recognized the value of a high ranking and visibility in search engine results, creating an opportunity for both white hat and black hat SEO practitioners. According to industry analyst Danny Sullivan, the phrase "search engine optimization" probably came into use in 1997. Sullivan credits Bruce Clay as one of the first people to popularize the term.

Early versions of search algorithms relied on webmaster-provided information such as the keyword meta tag or index files in engines like ALIWEB. Meta tags provide a guide to each page's content. Using metadata to index pages was found to be less than reliable, however, because the webmaster's choice of keywords in the meta tag could be an inaccurate representation of the site's actual content. Flawed data in meta tags, such as attributes that were inaccurate, incomplete, or false, created the potential for pages to be mischaracterized in irrelevant searches. Web content providers also manipulated some attributes within the HTML source of a page in an attempt to rank well in search engines.
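To make the indexer step more concrete, here is a minimal sketch in Python, using only the standard library. It takes an already downloaded page and records each word with its position, the links the page contains, and whatever the webmaster declared in the keyword meta tag. The names `PageIndexer`, `index_page`, `unsupported_keywords`, and `SAMPLE_HTML` are made up for illustration, and real search engines are far more elaborate than this; treat it as a toy model of the idea, not a description of any actual engine.

```python
import re
from html.parser import HTMLParser


def tokenize(text):
    """Split text into lowercase alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


class PageIndexer(HTMLParser):
    """Toy indexer (hypothetical): word positions, links, and meta keywords."""

    def __init__(self):
        super().__init__()
        self.word_positions = {}  # word -> positions in the page text
        self.links = []           # href values found on <a> tags
        self.meta_keywords = []   # keywords declared by the webmaster
        self._position = 0
        self._skip_depth = 0      # greater than 0 inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])
        elif tag == "meta" and (attrs.get("name") or "").lower() == "keywords":
            content = attrs.get("content") or ""
            self.meta_keywords = [
                k.strip().lower() for k in content.split(",") if k.strip()
            ]

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth:
            return  # ignore script and style contents
        for word in tokenize(data):
            self.word_positions.setdefault(word, []).append(self._position)
            self._position += 1


def index_page(html):
    """Parse one page and return the filled-in indexer."""
    indexer = PageIndexer()
    indexer.feed(html)
    indexer.close()
    return indexer


def unsupported_keywords(indexer):
    """Meta keywords whose words never all appear in the page text."""
    return [
        k for k in indexer.meta_keywords
        if not all(w in indexer.word_positions for w in tokenize(k))
    ]


SAMPLE_HTML = """<html><head><title>Bike repair</title>
<meta name="keywords" content="bike repair, cheap flights">
</head><body><p>We repair any bike.</p>
<a href="/prices">Prices</a><script>var x = 1;</script></body></html>"""

if __name__ == "__main__":
    page = index_page(SAMPLE_HTML)
    print(page.links)
    print(page.word_positions)
    print(unsupported_keywords(page))
```

The `unsupported_keywords` helper hints at why keyword meta tags turned out to be unreliable: nothing stops a page from declaring keywords that its own text never mentions, so an engine that trusts the tag alone can be misled.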

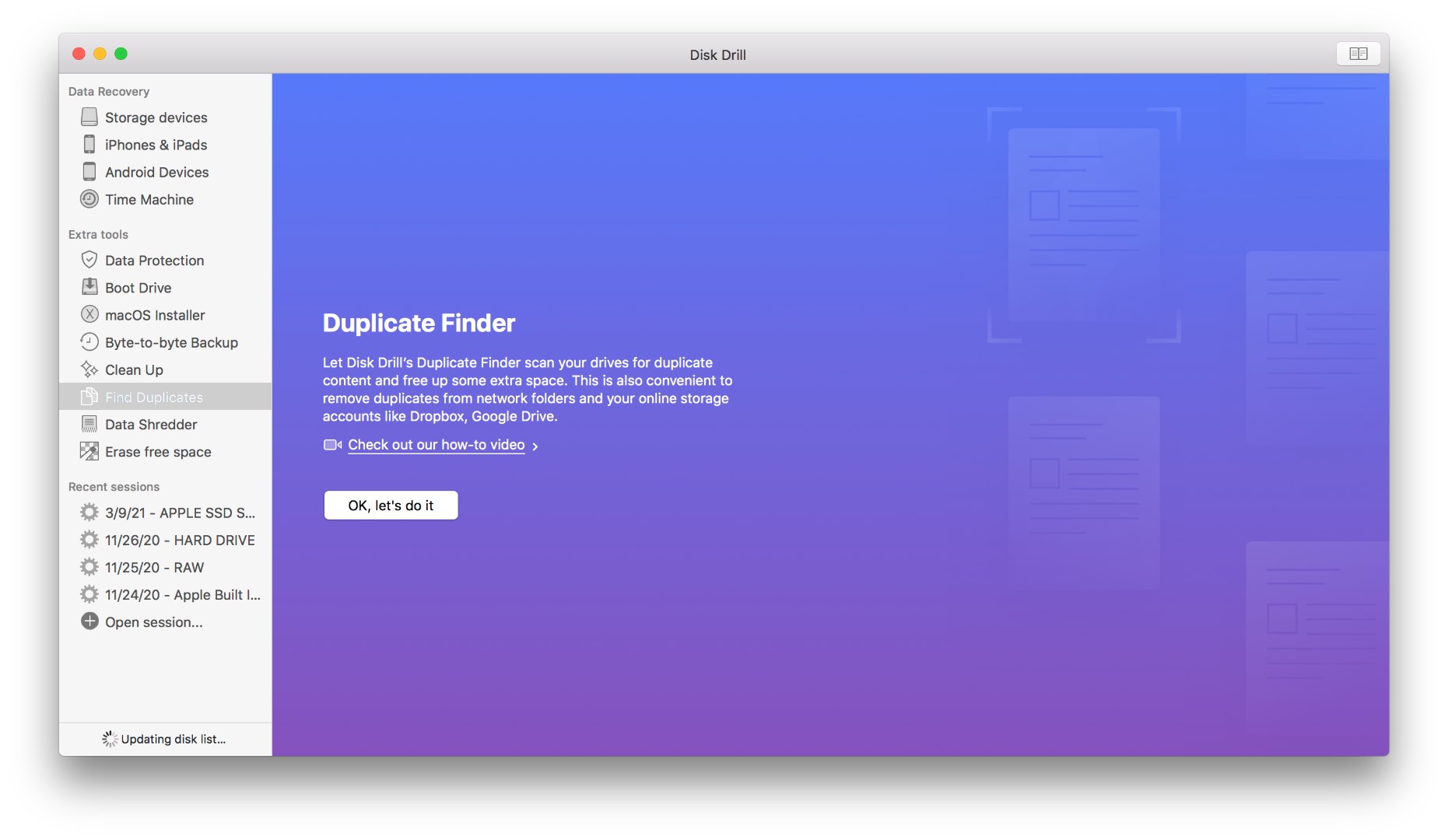

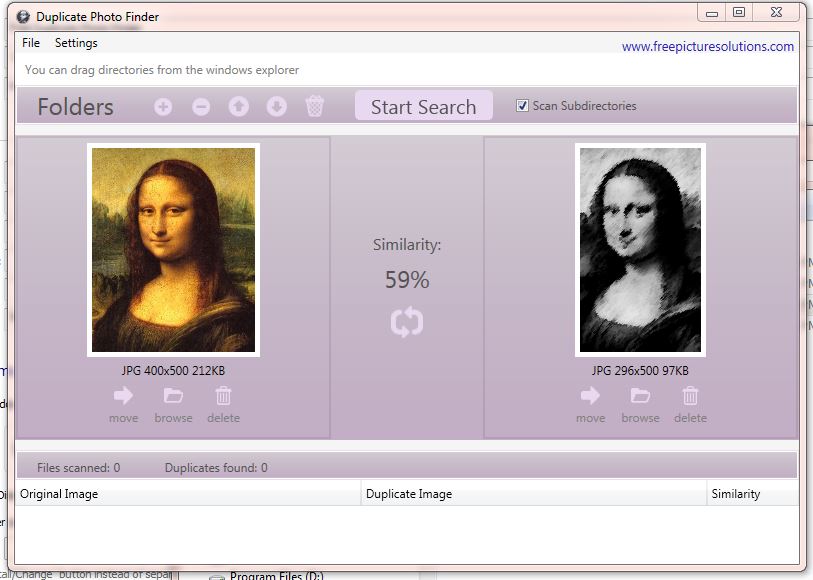

Search engine optimization (SEO) is the process of improving the quality and quantity of website traffic to a website or a web page from search engines. SEO targets unpaid traffic (known as "natural" or "organic" results) rather than direct traffic or paid traffic.

In the menu, select Tools / Find duplicates. This will look for duplicates across your whole collection.

Pass all the images you want to compare on the command line. In the menu, select File / Find duplicate. Drag and drop image files to the duplicates window. You can drop directories to add their contents recursively. For visual comparison of images, there are specific, non-default options on a drop-down menu. The "custom" level of similarity allows restricting pairings to only the highest degree of similarity, but it has to be set in Preferences as 99. Even then, it does not work perfectly, at least for some kinds of images, such as line art. Unfortunately, it does not provide an automatic selection mechanism with rational criteria such as resolution or date; the automatic selection seems to simply pick the first image found as the reference to preserve.

All three of these tools find visual duplicates, not just files that are identical byte for byte. Deleting many images can be extremely slow, as it tries to update the result count at every delete.
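The difference between byte-identical files and visual duplicates can be sketched in a few lines of Python. The text does not say which algorithm these tools use, so the example below swaps in a simple, well-known technique (an "average hash") purely as an assumption. It works on grayscale images represented as plain lists of pixel rows; decoding real image files is left out. The function names and the default threshold of 99, which only echoes the similarity setting mentioned above, are hypothetical.

```python
import hashlib


def byte_digest(data):
    """Fingerprint of raw bytes: equal only for byte-identical content."""
    return hashlib.sha256(data).hexdigest()


def average_hash(pixels, size=8):
    """Perceptual fingerprint of a grayscale image (rows of 0-255 ints).

    The image is shrunk to size x size cells by block averaging; each cell
    becomes 1 if it is brighter than the mean of all cells, otherwise 0.
    """
    height, width = len(pixels), len(pixels[0])
    if height < size or width < size:
        raise ValueError("image must be at least size x size pixels")
    cells = []
    for cy in range(size):
        y0, y1 = cy * height // size, (cy + 1) * height // size
        for cx in range(size):
            x0, x1 = cx * width // size, (cx + 1) * width // size
            block = [pixels[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    return [1 if cell > mean else 0 for cell in cells]


def similarity_percent(hash_a, hash_b):
    """Share of matching bits between two equal-length hashes (0 to 100)."""
    matches = sum(a == b for a, b in zip(hash_a, hash_b))
    return 100.0 * matches / len(hash_a)


def is_visual_duplicate(pixels_a, pixels_b, threshold=99.0):
    """True when the two images' hashes agree on at least threshold percent."""
    score = similarity_percent(average_hash(pixels_a), average_hash(pixels_b))
    return score >= threshold


if __name__ == "__main__":
    # A 16x16 horizontal gradient and the same picture scaled up to 32x32.
    small = [[x * 16 for x in range(16)] for _ in range(16)]
    large = [[(x // 2) * 16 for x in range(32)] for _ in range(32)]
    raw_small = bytes(v for row in small for v in row)
    raw_large = bytes(v for row in large for v in row)
    print(byte_digest(raw_small) == byte_digest(raw_large))
    print(is_visual_duplicate(small, large))
```

A resized copy has different bytes, so a plain checksum treats it as a different file, while the perceptual hash still matches. A hash this coarse also helps explain why some image types, line art for example, can be paired incorrectly even at a strict threshold.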
